Post by: Anis Farhan

Artificial Intelligence (AI) is no longer just powering virtual assistants or recommendation engines. It's now influencing legal systems, defense strategies, public surveillance, job markets, and even elections. With such rapid integration into the very fabric of modern societies, there’s a growing chorus of voices—from tech experts to policymakers—raising a critical question: Are we regulating AI fast enough?

The speed of AI advancement is outpacing legislation. Systems that generate content, diagnose diseases, and predict human behavior are already impacting millions. But without proper guardrails, AI could lead to data misuse, embedded biases, job displacement, and even the accidental reinforcement of harmful ideologies. Facial recognition, for instance, is used by governments in ways that can violate privacy, disproportionately affect marginalized communities, and erode civil liberties.

Moreover, autonomous AI systems—like self-driving cars or predictive policing algorithms—raise questions about responsibility when something goes wrong. Who is accountable: the creator, the user, or the machine?

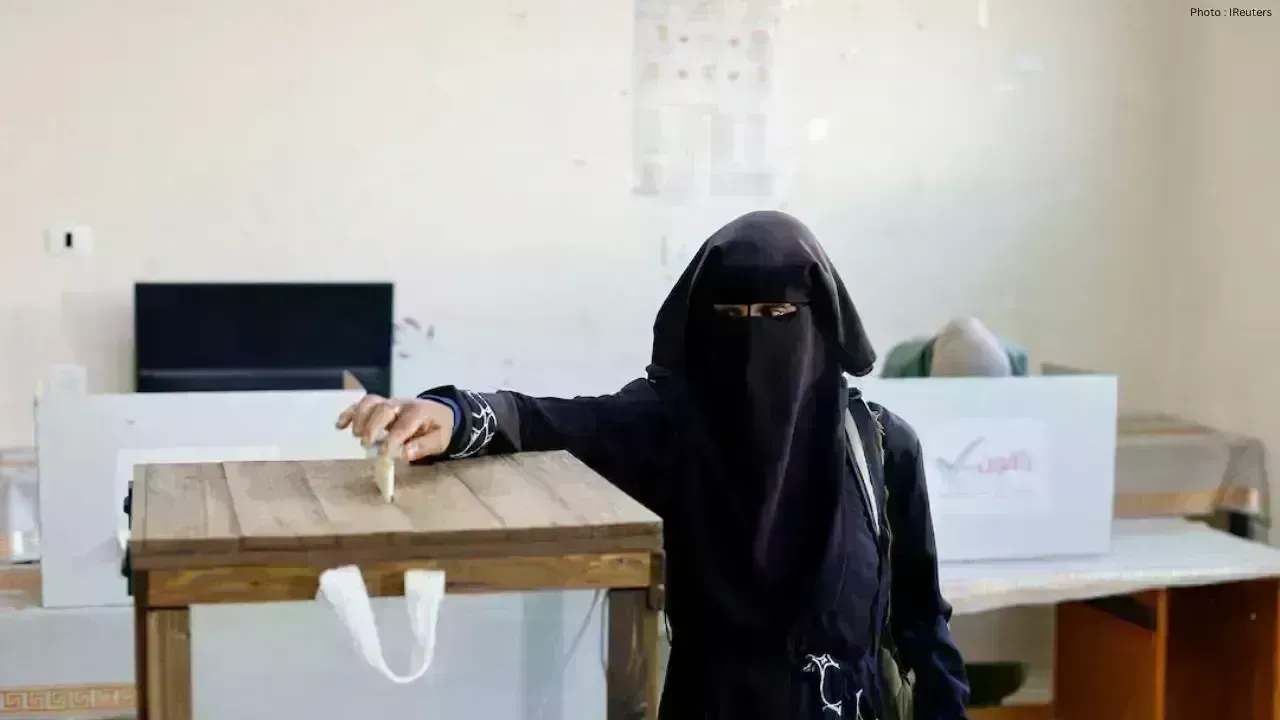

While global concern is shared, regulatory responses differ dramatically across regions. The European Union has taken a leadership position with its AI Act, which classifies AI systems into risk categories, enforcing stricter rules on high-risk applications. It emphasizes transparency, data governance, and human oversight. Meanwhile, the United States is adopting a lighter approach, focusing more on innovation than tight restrictions, though discussions are intensifying with growing concern around election interference and AI-generated misinformation.

In contrast, China is balancing strict oversight with its tech ambitions. The country has introduced rules that require companies to disclose how their algorithms work and ensure they align with socialist values. While these rules focus on control and ideological alignment, they also show a recognition of AI’s power.

One of the biggest challenges is that regulation lags where innovation happens fastest—inside private tech giants. Corporations like OpenAI, Google, Meta, and Amazon hold unprecedented influence over the development and deployment of AI tools. While some companies have initiated internal ethical boards and AI guidelines, self-regulation has limits.

These entities often have conflicting incentives: the drive for profit versus the need for responsible development. Without formal legislation, the ethical deployment of AI becomes optional, not mandatory. This vacuum allows for corner-cutting, data hoarding, and proprietary secrecy that can result in serious public consequences.

Unlike issues such as climate change, where global treaties are at least attempted, AI regulation is hindered by vastly different political systems, legal structures, and economic goals. A universal framework might sound ideal, but countries often have competing visions for AI—some focused on freedom and transparency, others on control and power.

Additionally, technological sovereignty is becoming a geopolitical asset. Countries are racing to become AI superpowers, reluctant to share algorithms, data access, or best practices that could tip the global balance.

Even if laws are passed, the core question of ethics remains. Should AI be allowed to mimic humans so closely that it’s indistinguishable? Should employers be allowed to use AI to monitor productivity and behavior in real time? Should AI-generated deepfakes be criminalized, even if used for satire or parody?

And what about AI in education, healthcare, or justice systems? Biases within algorithms have already shown how predictions can reinforce racial or gender disparities, leading to unjust outcomes in everything from loan approvals to prison sentencing.

Despite AI being a buzzword, public understanding of how these systems work—or how they’re used—is alarmingly limited. Most users interact with AI through convenience-driven features like autocorrect or shopping suggestions. But behind the scenes, vast amounts of data are being harvested, analyzed, and used to predict or influence behavior.

This lack of awareness limits democratic participation in regulation. If people don’t understand what’s at stake, they can’t pressure governments or companies to act responsibly.

A surprising turn in the global discourse is the question of machine rights. As generative AI becomes more sophisticated and autonomous agents begin making decisions without human prompts, ethicists have started debating whether we owe some level of protection or “rights” to machines.

It sounds futuristic, even absurd—but the fact that we’re already asking these questions highlights how fast the conversation is evolving.

Several countries are making piecemeal efforts of their own:

Canada has proposed its Artificial Intelligence and Data Act, which aims to prevent harmful AI use in high-impact areas.

India has announced its intent to regulate AI with a focus on inclusion and innovation but hasn't finalized any formal laws yet.

Japan is leaning toward flexible rules to promote investment while managing risks through voluntary frameworks.

These actions are steps forward, but there's still no central governing mechanism to unify or enforce global norms.

One emerging idea is the creation of a global AI regulatory body, similar to the International Atomic Energy Agency or the World Health Organization. Such a body could promote best practices, mediate disputes, and advise countries on ethical and technical standards. But getting sovereign nations to agree on terms, data sharing, and enforcement mechanisms will be a long road.

Until then, multilateral initiatives like the G7 AI Code of Conduct and the OECD AI Principles might pave the way toward collective understanding, even if they are non-binding.

While regulation might take time, individuals can already take action:

Be mindful of apps and platforms that collect personal data.

Question AI-generated content—especially news, reviews, and media.

Support brands and organizations that commit to ethical AI development.

Educate yourself on basic AI mechanisms—understanding algorithms empowers you to resist manipulation.

AI is not a future problem; it’s a now problem. It’s already writing stories, grading tests, scanning job applications, driving cars, and predicting consumer behavior. Without robust regulation, we risk entrenching systemic inequalities, eroding privacy, and handing control to entities that may not act in the public interest.

Governments must act fast, but responsibly. The window for shaping AI into a force for good is open now—but it may not stay open for long.

The views and opinions expressed in this article are those of the author and do not necessarily reflect the official policy or position of Newsible Asia. The content provided is for general informational purposes only and should not be considered as professional advice. Readers are encouraged to seek independent counsel before making any decisions based on this material.
